Trust Is All You Need

What 130+ agricultural leaders told us about how AI actually gets adopted and why the model is almost never the problem.

Confidence → Trust → Adoption → Repetition!

The One-Sentence Finding

On March 16, more than 130 people sat down together for 160 minutes at World Agri-Tech San Francisco (roughly 346 person-hours in total; that's a lot of time, so it must be important) to answer two specific questions: What does AI progress actually look like in agriculture? And how do we benchmark it for accuracy, effectiveness, and, most importantly, impact? (Models are interesting, but outcomes are better.)

We had builders in the room. We had extension agents, academic researchers, the agronomy teams of major input companies, founders, and even some growers. They spanned geographies, activities, crops, and resources.

At the end of the session, one finding united the room across every segment, every context, every stakeholder type:

"Trust is the rate-limiting factor for AI adoption in agriculture. Not model quality. Not data volume. Not technical sophistication. Trust."

This article shares our findings: what we heard, what it means for anyone building or deploying AI in agriculture, and the framework we are proposing for doing the work.

Why Trust Is the Central Variable

Discussions of AI adoption often cite cost, the state of the capability, or organizational change management. However, in an industry as emotional and intimate as agriculture, where one bad decision can mean one catastrophic year, the gating factor is different: it is whether a farmer, an agronomist, an advisor, or a lender has enough confidence in the system to accept the risk that an action, a recommendation, or a selection poses, and to bet a season on it.

This is not a feeling. It is a mathematical reality of how agriculture operates. An often-cited figure is that a row-crop farmer in the U.S. Midwest has roughly 40 harvests in a working career. A smallholder grower whose family eats what they harvest has even less margin for a bad season. The asymmetric stakes between AI developers (who iterate weekly) and AI users (who iterate annually) shape every decision about whether to trust a recommendation.

Trust in agricultural AI is not built the way trust is built in a consumer SaaS product. It is built over multiple seasons, validated against real outcomes, and (the part builders most often miss) mediated through existing human relationships: the agronomist, the ag retailer, the extension agent, the neighbor. These are not obstacles to AI adoption. They are the trust infrastructure AI has to plug into.

- Trust is longitudinal, not transactional. One good demo does not produce trust. Neither does one good season. Trust is built brick by brick over multiple seasons, and can come apart all at once.

- The stakes are asymmetric. The developer of an AI recommendation system can ship a buggy release on a Tuesday and fix it on a Wednesday. The farmer who acted on that recommendation has committed seed, inputs, and fuel. The feedback loop is a year long, and the cost of a bad call is the season.

- Trust flows through existing relationships. Farmers trust farmers. They trust the agronomist they have worked with for a decade. AI that tries to bypass these relationships loses. AI that strengthens them wins.

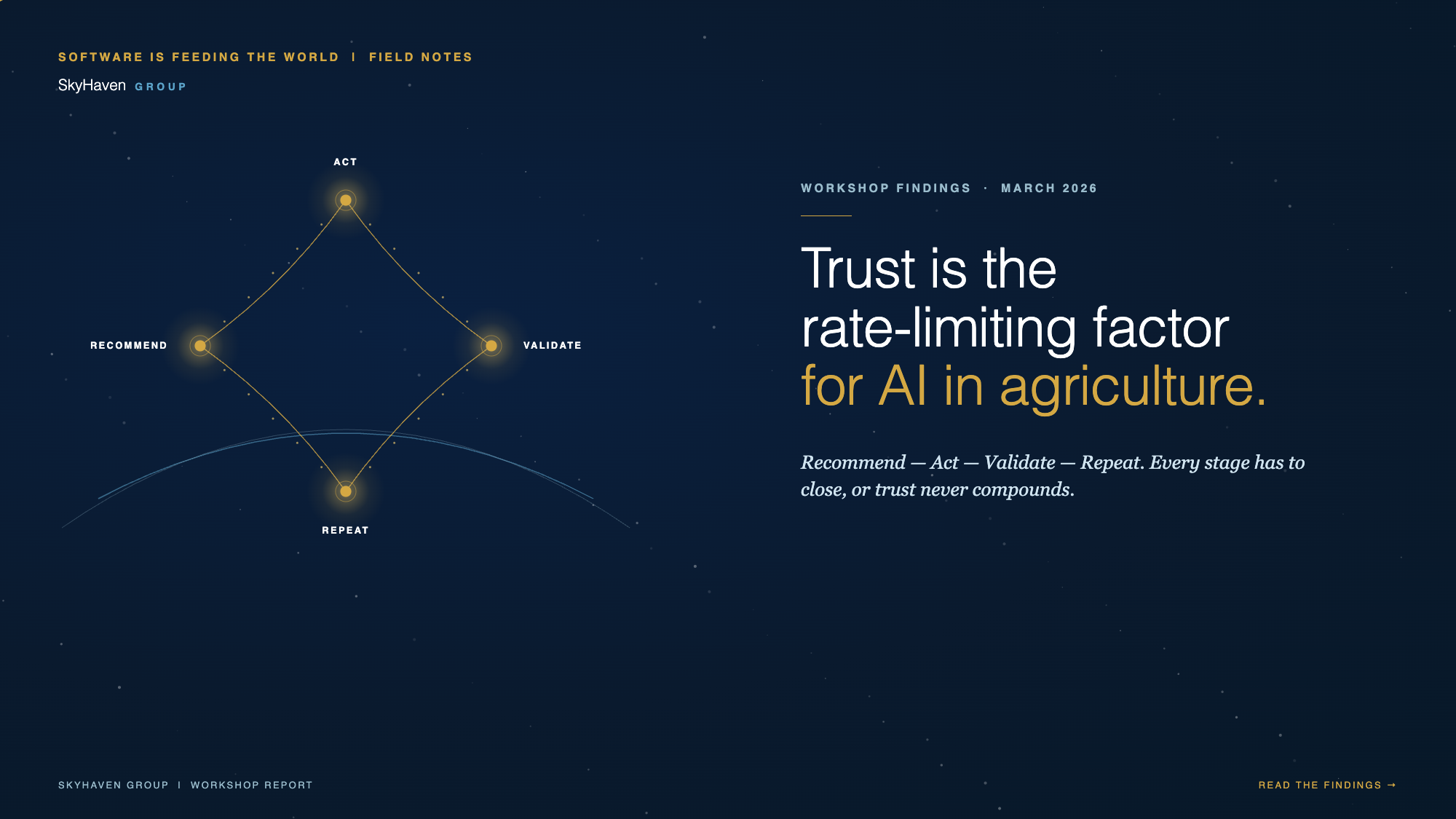

The Trust Loop

A phrase from the workshop that has stuck with us: “recommendations to repeatable actions.”

What it describes is the loop by which trust in an AI system gets built over time. The AI recommends. The human acts. The outcome validates (or does not). The system learns from that outcome, and the next recommendation is a little better calibrated than the last. Over enough cycles, the human stops second-guessing every recommendation. That is trust, mechanically defined.

“Recommend → Act → Validate → Repeat. That is the loop. Every stage has to be measurable, and every stage has to close, or trust never compounds.”

Most agricultural software only measures its own first step: recommendations produced and accepted. Very few close the loop on what happened. Did yield improve? Did margin grow? Did the grower come back? Systems need to be measured by farmer outcomes, not product engagement.

This is where AI benchmarking needs to grow up. Are these models accurate, do they create value, and do they move the grower closer to their goal? Measuring that season after season is how trust compounds. Without it, AI in agriculture stays stuck in pilots.
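As a rough illustration of what "closing the loop" could mean in measurable terms, the sketch below tracks how many recommendations reach each stage. The record fields, the metric names, and the sample data are all hypothetical and do not come from the workshop; a real system would define outcomes (yield, margin, return usage) in its own terms.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LoopRecord:
    """One pass through Recommend -> Act -> Validate for a single recommendation."""
    season: int
    acted_on: bool                      # Act: did the grower follow the recommendation?
    outcome_met: Optional[bool] = None  # Validate: None means the outcome was never measured


def loop_metrics(records: list[LoopRecord]) -> dict[str, float]:
    """Share of recommendations that reach each stage of the loop."""
    total = len(records)
    acted = [r for r in records if r.acted_on]
    validated = [r for r in acted if r.outcome_met is not None]
    succeeded = [r for r in validated if r.outcome_met]
    return {
        # Most software stops here: recommendations produced and accepted.
        "acted_rate": len(acted) / total if total else 0.0,
        # How often an acted-on recommendation had its outcome measured at all.
        "closure_rate": len(validated) / len(acted) if acted else 0.0,
        # Of the measured outcomes, how many met the grower's goal.
        "success_rate": len(succeeded) / len(validated) if validated else 0.0,
    }


# Illustrative data only.
records = [
    LoopRecord(season=1, acted_on=True, outcome_met=True),
    LoopRecord(season=1, acted_on=True, outcome_met=None),  # accepted, never validated
    LoopRecord(season=1, acted_on=False),
    LoopRecord(season=2, acted_on=True, outcome_met=False),
]
metrics = loop_metrics(records)
print(metrics)
```

In this toy data, three of four recommendations were acted on, but only two of those three ever had an outcome recorded. That unmeasured middle is the part of the loop the section argues most products leave open.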

Why There Is No Universal Benchmark

A second finding from the workshop that has consequences for every vendor in the market: agriculture is not one market. It is dozens of micro-markets with different risk profiles, data environments, and trust structures.

A large row-crop operation in Iowa, running a tractor with a cab and working with an agronomist who has worked with them for 20 years, is a different adoption environment than a 2-hectare cassava plot in Tanzania, where the farmer’s only source of advice is a government extension agent with a 1:6,000 agent-to-farmer ratio. Both are “agriculture.” Neither is the other. A benchmark that treats them the same is worse than no benchmark at all, because it produces false confidence.

This is why the workshop ended with a call for a modular, context-aware benchmarking framework rather than a single score. What is being evaluated? For whom? Under what assumptions? The benchmark has to answer those questions first, and the framework has to be composable so different contexts can share comparable-but-not-identical measurements. We need a set of heuristics that yields approaches combining consistency with flexibility.

- The Global North / Global South divide. Large commercial operations often have rich data and established advisory relationships. Smallholder farmers often have neither, and may be using a phone chat powered by AI as their first touchpoint with formal agronomic guidance in any form. The evaluation framework has to accommodate both, separately.

- Hyperlocalization is a prerequisite. Language, crop type, climate zone, economic context. These are not features to add later. They are conditions for the system working at all. A benchmark that scores an AI system high in English and low in Swahili is measuring deployment, not capability.

- Agentic AI requires a higher bar. As Ag AI moves from recommendation to autonomous action (prescription dosing, autonomous spraying, autonomous equipment), the accuracy threshold must rise substantially. Human-in-the-loop remains essential when consequences are irreversible.
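One way to picture a modular, context-aware benchmark is as a result that always carries its own context, so a score is never read without knowing what was evaluated and for whom. The sketch below is a minimal illustration under stated assumptions: the field names, the segment labels, and both threshold values are hypothetical, not figures proposed at the workshop.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Context:
    """Answers 'for whom, under what assumptions?' for one evaluation."""
    segment: str      # e.g. "row-crop" or "smallholder"
    language: str     # e.g. "en" or "sw"
    autonomous: bool  # True when the system acts without a human in the loop


@dataclass(frozen=True)
class Result:
    component: str    # what is being evaluated, e.g. "advisory_quality"
    context: Context
    score: float      # 0.0 to 1.0 on a component-specific scale


# Hypothetical pass thresholds; the bar is higher for autonomous action.
ADVISORY_THRESHOLD = 0.80
AUTONOMOUS_THRESHOLD = 0.95


def passes(result: Result) -> bool:
    """Apply the stricter threshold whenever the system acts autonomously."""
    threshold = AUTONOMOUS_THRESHOLD if result.context.autonomous else ADVISORY_THRESHOLD
    return result.score >= threshold


def directly_comparable(a: Result, b: Result) -> bool:
    """Scores can be ranked against each other only when component and context match."""
    return a.component == b.component and a.context == b.context


# Illustrative results only.
iowa = Result("advisory_quality", Context("row-crop", "en", False), 0.85)
tanzania = Result("advisory_quality", Context("smallholder", "sw", False), 0.85)
sprayer = Result("prescription", Context("row-crop", "en", True), 0.85)

print(passes(iowa), passes(sprayer), directly_comparable(iowa, tanzania))
```

The same 0.85 passes as advice and fails as autonomous action, and the Iowa and Tanzania results share a component and a scale without being ranked against each other. That is one reading of "comparable-but-not-identical"; other designs are possible.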

The Data Gap That Nobody Wants to Name

The third big finding from the workshop: the data gap in Ag AI is not a data-collection gap. It is a data-curation, data-quality, and data-trust gap. The hardest part of the gap is the part nobody wants to talk about, which is that some farmers actively distrust data sharing.

There are rational reasons for this. Farmers have seen data they shared be used to inform pricing against them, to enforce compliance in ways they did not expect, and to enable competitors to reverse-engineer their operations. In some regions, farmers deliberately introduce noise into shared data because they assume the data will be used against them eventually. This is not paranoia. It is a rational response to an ecosystem that in the past has not always established governance norms to protect their data.

Any serious framework for AI benchmarking in Ag has to contend with this. It is not enough to measure whether an AI system produces accurate recommendations. We also have to measure whether the data environment the system operates in has clear provenance, accountable ownership, updatable records, and traceability. Those are the attributes that turn shared data from a liability for the farmer into an asset.

“Small, well-structured, traceable data outperforms massive poorly structured sets. Bad data produces confident wrong answers faster than good data produces correct ones.”
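The four data-environment attributes named in this section (provenance, ownership, updatable records, traceability) could be recorded as a simple checklist per dataset. This is only a sketch: the class and function names are hypothetical, and treating each attribute as a yes/no flag is a simplifying assumption rather than something the workshop specified.

```python
from dataclasses import dataclass, fields


@dataclass
class DataTrustCheck:
    """The four attributes from the text, recorded as yes/no for one dataset."""
    clear_provenance: bool       # is it known where the data came from?
    accountable_ownership: bool  # is someone answerable for how it is used?
    updatable_records: bool      # can records be corrected or refreshed?
    traceability: bool           # can a value be traced back to its source?


def missing_attributes(check: DataTrustCheck) -> list[str]:
    """Names of the attributes a dataset does not yet satisfy."""
    return [f.name for f in fields(check) if not getattr(check, f.name)]


# Illustrative dataset only.
shared_field_data = DataTrustCheck(
    clear_provenance=True,
    accountable_ownership=False,
    updatable_records=True,
    traceability=False,
)
gaps = missing_attributes(shared_field_data)
print(gaps)
```

Reporting the gaps by name, rather than collapsing them into one readiness score, keeps the result actionable for whoever governs the data.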

What This Means for What We Build Next

The workshop surfaced three near-term goals for the community:

- Facilitate capacity building and knowledge sharing. The field does not yet have a shared vocabulary for AI capability in agriculture. Programs that help practitioners, founders, and advisors talk about AI capability precisely are a necessary precursor.

- Develop component-level and context-specific benchmarks. Not one score. A set of heuristics and a toolbox of benchmarks (e.g., one for advisory quality in a smallholder context, one for agentic prescription in row crops, etc.).

- Assess organizational and data readiness. Before a benchmark can say whether a system works, the organization using it has to be ready to act on the result. Readiness across data governance, operational maturity, and change management is a precondition for any benchmarking effort to produce change rather than just a report.

The Actions That Follow

Three concrete actions came out of the workshop that the group is taking forward:

- Connect to the AI AgriBench Consortium. The Center for Digital Agriculture at the University of Illinois has formed a consortium on AI benchmarking in agriculture, AI AgriBench.

- Organize context-specific focus groups. The next phase of the work is digging into specific contexts, in dedicated sessions with representatives across the value chain.

- Explore additional programming with partners. SkyHaven Group, Software is Feeding the World, DeepRoot Strategies, Athena Infonomics, and the Center for Digital Agriculture are each exploring follow-on programming. Join the session on April 29 if you want to be part of that conversation (registration details are at the end of this article).

Why This Matters Now

We cannot afford to miss the benefits of AI because of the digital hangover; we need to listen in order to drive adoption and realize those benefits. There is clear pressure on the system from challenges like climate, labor, and input costs that continue to rise. Innovators are building AI-enabled tools that can help address those pressures faster than the trust infrastructure needed to deploy them is being built, which stunts impact and limits benefit.

This gap won't close on its own. We think it only closes when the people who build, deploy, fund, and use Ag AI align on a set of principles that become a framework, one that provides consistency and flexibility about what works, under what conditions, and for whom. That shared framework is a benchmark. But it has to be a benchmark that speaks the language of the field, not the language of the lab.

That is what we need, and it is what the April 29 findings session is for: to share the workshop output and take feedback from a wider audience than the one that was in the room.

“Trust is all you need. That is not a slogan. It is what the room told us. And it is what the next phase of AI in agriculture has to organize itself around.”

Join us on April 29

We are hosting a virtual session on Wednesday, April 29, to share the full workshop findings, and we will try to open the floor for discussion. The session is free and intended for business and technology leaders in AgTech who are working on or thinking about AI initiatives. Registration link in the first comment.